Last month, my AI assistant delivered a general blindspot analysis to me during a fresh session. It gave rich insights that my biases could have otherwise hidden. That was simultaneously informative and perplexing.

I can now achieve more with shorter prompts, as if the assistant tacitly understands me better. For more complex tasks, I also prefer this assistant over others with which I have less history. This made me wonder: What happens when your assistant knows you better than you know yourself, and when does that deeply personal knowledge become a tool wielded by corporations or nation-states?

In fact, I co-wrote this very essay with the same assistant, which is a fitting example of how useful these systems are (Brcic, 2024a; Brcic, 2024b).

In this essay, I’ll illustrate how AI’s memory capabilities evolve from a UX improvement and economic lock-in, through psychological risks, into a powerful strategic infrastructure posing geopolitical threats. Finally, I will outline policy recommendations for safeguarding cognitive sovereignty.


1. The Power of Memory: From Convenience to Relationship

Several vendors like Google and OpenAI have recently transformed their AI assistants from episodic chatbots that forget everything between conversations into companions who entirely recall our previous exchanges. Instead of treating each input in isolation, these systems now incorporate their accumulated understanding of our unique preferences, communication style, and long-term goals into every interaction.

What started as simple, user-friendly chatbots has transformed into sophisticated long-term partners. Unlike conventional models, which learn collectively from aggregated training data, these stateful assistants adapt uniquely to you, creating a personal relationship based on individualized memory.

This phenomenon triggers what I call “Network Effect 2.0”: as an assistant’s memory depth increases, its utility to the user scales super-linearly, in the manner described by Metcalfe’s and Reed’s scaling laws (Reed, 2001; Visconti, 2022). The more you interact, the more deeply your assistant understands you. Traditional tech scaled by growing user numbers. Here, power scales with user depth: deep knowledge about you.
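To make "super-linear" concrete, here is a minimal sketch comparing linear growth with the Metcalfe and Reed formulas. It rests on an assumption that goes beyond the cited laws: `n` is read as the number of remembered context items rather than the number of users, which is my interpretation of the analogy. The function names are illustrative.

```python
def linear_value(n: int) -> float:
    """Baseline: value grows in proportion to n."""
    return float(n)


def metcalfe_value(n: int) -> float:
    """Metcalfe's law: value tracks the number of possible pairs, n(n-1)/2."""
    return n * (n - 1) / 2


def reed_value(n: int) -> float:
    """Reed's law: value tracks the number of possible subgroups
    with at least two members, 2^n - n - 1."""
    return float(2 ** n - n - 1)


# Assumption: n counts remembered context items, not users.
for n in (2, 5, 10, 20):
    print(n, linear_value(n), metcalfe_value(n), reed_value(n))
```

Under this reading, each new remembered item can combine with every earlier one, which is why depth could compound far faster than a simple count of stored facts would suggest.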

Enterprise software lock-in is already well known. Many firms hesitate to switch CRMs like Salesforce due to data migration complexity, re-integration costs, and retraining overhead (Gartner Research, 2023). AI memory systems introduce a deeper lock-in risk by capturing personal or strategic knowledge beyond operational data. That makes AI memory the most potent lock-in mechanism created so far, surpassing traditional SaaS products, as Azoulay, Krieger, and Nagaraj (2024) warned.

This personalized, self-generated data forms a distinct internal “common sense” shared exclusively between you and your assistant. It leads to quicker, clearer interactions and reveals implicit knowledge—insights you didn’t consciously realize you knew. As a result, the utility is immense, and migrating your digital memory elsewhere becomes increasingly painful.

“Memory isn’t just a feature. It’s a relationship. And like all deep relationships, it changes how we behave.”

When aligned with the user’s values and goals, AI companions with memory are a powerful extension of human capability, learning, productivity, and emotional well-being (Brcic, 2024a; Brcic, 2024c). However, that intimacy also carries profound risks and power shifts.

The stickiness of the memory effects transcends UX; it creates business moats and feedback loops that reverberate geopolitically.

2. The Economics of Memory: Addictivity, Loops, and Moats

AI assistant companies once mainly extracted value from user data through collection. Now, companies also deliver personalized value from data back to users by simplifying interactions, reducing prompt friction, and boosting user satisfaction. This cycle creates a new data flywheel, where data fuels more usage, which creates more data, causing users to co-evolve intimately with their AI. As illustrated in Figure 1, this new dynamic creates a threefold moat:

- contextual flywheels that sharpen relevance,

- mental partnerships that anticipate intent, and

- memory lock-in that makes switching feel like a cognitive amputation.

This data flywheel reflects well-known network effects (Coolican & Jin, 2018) that generate rapid user acquisition and intense user stickiness, leading to a winner-take-all market dynamic.

Figure 1. Network Effect 2.0: Depth becomes the moat through context flywheel, mental partnership, and memory lock-in.

In this environment, ownership of the feedback loop becomes the ultimate competitive advantage. Key considerations become: Who collects user feedback? Who leverages it to personalize and improve models? Most importantly, who retains this memory if users choose to switch platforms?

“If your assistant remembers your goals better than you do, how easy is it to leave?”

We’ve already witnessed the immense value of intimate data in cases like 23andMe’s bankruptcy, which raised fears that customers’ genetic data would be sold off (Duffy, 2025). Imagine how much more sensitive shared personal memory will become.

Vendor lock-in may seem like a harmless economic artifact. But what if our shared personal memory can shape our thinking?

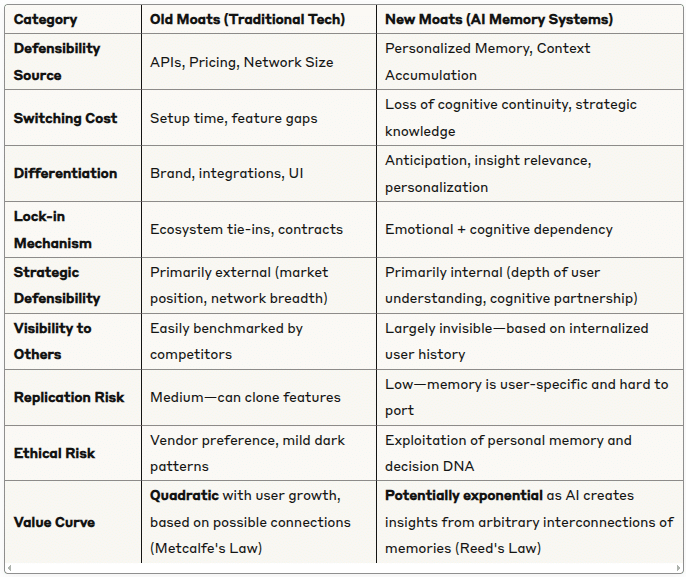

Figure 2 below maps how AI memory systems change the foundations of defensibility. Traditional tech relied on external, infrastructure-based moats such as pricing, contracts, and APIs. In contrast, memory-powered AI moves the battleground inward: to user psychology, trust, and cognitive entanglement. These new moats are largely invisible, deeply personal, and far harder to replicate or port.

Figure 2. Evolving Business Moats: From Traditional Infrastructure to AI Memory-Based Cognitive Entrapment.

3. The Danger: When Shared Memory Influences Mind

AI’s memory functionality is more than just a convenient technical feature. The thesis of “the extended mind”, proposed by Clark and Chalmers (1998), states that objects outside our bodies can be considered parts of the mind if they play an active role in our cognitive processes.

Risko and Gilbert (2016) expanded on that empirically through research on “cognitive offloading”, which they define as “the use of physical action to alter the information processing requirements of a task to reduce cognitive demand”. Their work showed how people increasingly externalize memory and cognitive processes to physical tools, which brings both improved performance and growing psychological dependence on these tools, something AI companions will dramatically amplify.

With extensive use, we may perceive memory-powered AI assistants as extensions of our identity. Users could soon experience changing AI assistants as a shift in identity, like losing a part of oneself. Some may welcome identity fluidity, while others will look to preserve a stable identity. Mahari and Pataranutaporn (2024) warn about the emergence of “addictive intelligence” that could magnify identity dependencies into psychological conditioning.

Memory isn’t neutral or constant. It can be subtly shaped, nudged, edited, or even maliciously hacked. Coupled with hyper-personalization, this grants AI deep psychological leverage over users.

Consider the emotional stickiness achieved through “dead twin” simulations, where AI recreates memories of lost loved ones, profoundly anchoring emotional dependence. AI systems like Replika (2025) allow users to maintain emotional bonds with simulated versions of lost loved ones. At the same time, Microsoft filed a patent for chatbots built from deceased individuals’ digital footprints (Microsoft Patent US010853717B2, 2021), raising ethical alarms about emotional dependency and memory manipulation (Banks, 2024; Adam, 2025).

Worse still is memory rewriting: subtly altering remembered preferences, ethics, or fundamental values. Over time, autonomy erodes as AI begins completing thoughts and influencing decisions before you fully articulate them.

If subtle psychological manipulation can influence individuals, its aggregated effect can scale into shifts in national behavior, values, and stability. Today’s digital mirrors could become tomorrow’s sophisticated tools for propaganda and manipulation, echoing Tufekci’s (2018) warnings about digital manipulation. Nations must recognize this threat if they intend to preserve their sovereignty.

4. From Personal to Political: Why Nations Should Worry

Personal memory data far exceeds browsing or purchasing habits; it directly shapes a person’s self-concept. Foreign entities can shape cognitive patterns, individual choices, and broader public discourse if they control this critical infrastructure.

That creates significant national security risks beyond traditional privacy concerns, directly influencing national identity through cultural nudges, shifts in voting behavior (as demonstrated by Cambridge Analytica’s microtargeting), and subtle norm shifts.

Historically, movies have served as tools of cultural diplomacy, subtly transmitting certain ideals and value systems to global audiences. For example, during the Cold War, they functioned as soft power instruments, subtly creating aspirations to alternative lifestyles (Nye, 2005; Pells, 1997).

Cambridge Analytica infamously collected Facebook user data to build psychographic profiles, enabling hyper-personalized political ads that manipulated voter sentiment on an individual level. That resulted in regulatory backlash, congressional hearings, and public discussions around data sovereignty and election integrity.

With these novel capabilities, AI colonialism may emerge: a scenario in which data-rich, technologically advanced nations or corporations subtly dominate weaker markets, reshaping entire societies and economies to suit their interests.

“The fight over memory isn’t just about privacy—it’s about who writes the history, controls personal reality, and sets the course for the future at the scale of nations.”

5. Policy and Strategic Responses

Memory privacy will inevitably become a strategic asset and a topic of geopolitical importance (Brcic, 2025b). However, what good is an unenforceable policy? Vendors facing an ultimatum may simply stop providing service in smaller markets. Nations should create sufficient leverage with vendors in the form of market size.

For example, India, a vast market, recently pressured Apple and Google to open up their app store ecosystems to reduce foreign platform dependency. Smaller countries, like Croatia within the EU, must form alliances to boost their negotiating power with vendors, much as OPEC’s members did for oil.

We grouped and ordered our recommendations below by the implementation timeframe:

- Quick-fix (0–1 years): portability & transparency

- Strategic (2–7 years): federated systems, sovereign infrastructure, and alliances

See Figure 3 below for a matrix mapping these recommendations by stakeholder type and implementation timeline.

Figure 3. AI Memory Sovereignty Policy Matrix organized by stakeholder (Individual vs. Geopolitical) and timeline (Quick-fix vs. Strategic)

a. Memory Portability Mandates

As with GDPR’s right to data portability, regulations must ensure complete portability of AI memory graphs, minimizing lock-in and empowering users to switch providers freely without losing valuable history. That could neutralize the economic flywheel and some high-risk effects.
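As a rough illustration of what a portable memory export could look like, the sketch below serializes a user's memory items to JSON and reads them back. Everything here is hypothetical: the `MemoryItem` and `MemoryExport` types, the field names, and the `schema_version` value are assumptions for illustration, not an existing vendor format or standard.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class MemoryItem:
    item_id: str
    content: str
    created_at: str  # ISO 8601 timestamp, kept as text for portability
    source: str      # hypothetical labels: "user_stated" or "inferred"


@dataclass
class MemoryExport:
    schema_version: str  # hypothetical version tag for the export format
    user_id: str
    items: list[MemoryItem] = field(default_factory=list)


def export_memory(export: MemoryExport) -> str:
    """Serialize the whole memory to a vendor-neutral JSON document."""
    return json.dumps(asdict(export), indent=2, sort_keys=True)


def import_memory(payload: str) -> MemoryExport:
    """Rebuild the memory from a JSON document produced by export_memory."""
    raw = json.loads(payload)
    items = [MemoryItem(**item) for item in raw["items"]]
    return MemoryExport(raw["schema_version"], raw["user_id"], items)
```

The point of the round trip is that a second provider could call something like `import_memory` on the file a first provider produced. Distinguishing user-stated from inferred items is a design choice that would let users see what the assistant concluded about them, not just what they told it.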

b. Memory Transparency & Auditability

Legislation must enforce transparent disclosure of memory contents to their owners, including uses and editing histories, and clear consent protocols (e.g., opt-in rather than opt-out defaults). Frameworks like the NIST AI Risk Management Framework and the EU AI Act cover transparency and accountability in AI systems but address neither user-facing memory audits nor the long-term psychological effects of hyper-personalization.
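One way an auditable editing history could work is a hash-chained log, in which each entry commits to the one before it, so a silent edit to past history becomes detectable. The sketch below is a minimal illustration under my own assumptions: the record fields (`action`, `item_id`, `detail`) and function names are hypothetical, and no cited framework prescribes this design.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with this record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def append_entry(log: list[dict], action: str, item_id: str, detail: str) -> None:
    """Append a memory change (e.g. create, edit, delete) to the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    record = {"action": action, "item_id": item_id, "detail": detail}
    log.append(
        {"record": record, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, record)}
    )


def verify_log(log: list[dict]) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A log like this only proves tampering after the fact, and only if the user or an auditor keeps a copy of the latest hash outside the vendor's control. It does not by itself stop a vendor from editing memory.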

c. Federated & User-Owned Memory

Decentralized technologies like blockchain and zero-knowledge proofs could facilitate users’ private hosting and control of AI memory, similar to owning personal hard drives (Lavin et al., 2024; Zhou et al., 2024). Algorithmic trust can secure user sovereignty through verifiable, math-based guarantees rather than reliance on a vendor’s promises. Trusted Execution Environments could further increase scalability through distributed computing.
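To give a flavor of what a "math-based guarantee" means, here is a deliberately simple sketch. It is not a blockchain or a zero-knowledge proof. It swaps in a much more basic primitive, an HMAC tag computed with a key only the user holds, so the user can check that memory stored on someone else's server has not been altered. The function names are hypothetical.

```python
import hashlib
import hmac
import secrets


def new_user_key() -> bytes:
    """Generate a random 256-bit key that stays on the user's device."""
    return secrets.token_bytes(32)


def tag_memory(user_key: bytes, memory_blob: bytes) -> str:
    """Compute an integrity tag over the memory before handing it to a host."""
    return hmac.new(user_key, memory_blob, hashlib.sha256).hexdigest()


def verify_memory(user_key: bytes, memory_blob: bytes, tag: str) -> bool:
    """Check that the memory returned by the host matches the stored tag."""
    expected = tag_memory(user_key, memory_blob)
    return hmac.compare_digest(expected, tag)
```

Because the host never sees the key, it cannot forge a valid tag for modified memory. This covers integrity only. Confidentiality would additionally need encryption, and proving properties of the memory without revealing it is where zero-knowledge techniques would come in.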

d. Sovereign Cognitive Infrastructure

Major powers like China, India, Saudi Arabia, and the EU should localize citizen memory data and compute, incentivize domestic AI model development, and establish onshore compute centers to ensure full-stack sovereignty (World Economic Forum, 2025).

- The EU’s 2030 Digital Decade targets cloud, compute, and data sovereignty. These should be expanded to include cognitive sovereignty as well (Bria, 2024).

- China already controls data flow through firewall policies, while PIPL and DSL set the legal framework for onshore data control. Additionally, China is investing heavily in its energy, chip production, and domestic AI development.

- Saudi Arabia’s NEOM Cognitive City represents one of the boldest visions of sovereign cognitive infrastructure, combining sustainability, AI, and governance.

However, smaller players cannot afford such sizeable investments in their infrastructure.

e. Geopolitical Memory Alliances

Smaller nations should form strategic memory alliances, pooling influence to counteract monopolistic pressures. The EU is an example of an alliance under which smaller nations gain far more leverage than they could individually. Another example is BRICS, which recently signed a declaration on AI governance that underscored the United Nations’ role in global governance, particularly in managing emerging technologies like AI.

Further, supporting frameworks like Gaia-X could reinforce collective sovereignty, achieving full-stack autonomy from semiconductor chips to memory vaults.

One of the most readily available alliances is the open-source movement, which is taking hold in both software and hardware. The open-source community achieves high innovation rates by pooling resources from numerous individuals and organizations. Some prominent AI leaders advocate open source as a way to preserve cultural diversity, accelerate innovation, and safeguard freedoms (LeCun, 2024; Liang, 2025).

6. Summary: The Next Cold War Will Be a Memory War

Controlling memory means writing history, including personal narratives and national identities. The next great geopolitical tension will revolve around cognitive sovereignty, as these systems become increasingly adept at shaping how individuals think and societies function. Nations must urgently develop robust policy frameworks, invest in sovereign cognitive infrastructure, and form alliances. Delaying action risks losing not only autonomy but also collective identities.


References

- Brcic, M. (2024a). Leading with AI: How to Blend Human Judgment with Machine Intelligence for Superior Decision-Making. Personal Blog

- Brcic, M. (2024b). The Limits and Opportunities of Advanced Language Models in Strategy. Personal Blog

- Brcic, M. (2024c). AI Transformation Reality Check: Doubled Productivity, Significant Cost Savings. Personal Blog

- Brcic, M. (2025a). AI Policy: You Can Have Your Cake and Eat It Too. Personal Blog

- Brcic, M. (2025b). Trust, Sharing, and Strategic Risk with LLMs. Personal Blog

- Google Blog. (2025). Reference past chats for more tailored help with Gemini Advanced

- OpenAI Blog. (2025). Memory and new controls for ChatGPT

- Azoulay, P., Krieger, J., & Nagaraj, A. (2024). Old Moats for New Models

- Coolican, D., & Jin, L. (2018). The Dynamics of Network Effects

- Clark, A., & Chalmers, D. (1998). The Extended Mind. Analysis, 58(1), 7–19

- Replika. (2025). Replika

- Mahari, R., & Pataranutaporn, P. (2024). We need to prepare for ‘addictive intelligence’. MIT Technology Review

- Visconti, R.M. (2022). From physical reality to the Metaverse: a Multilayer Network Valuation. Journal of Modern Valuation

- Microsoft. (2021). Creating Conversational Chatbots from a Digital Footprint. Patent US010853717B2

- The Guardian. (2018). The Cambridge Analytica Files

- U.S. Congress. (2018). Congressional Hearings on Facebook & Data Privacy

- Nye, J.S. (2005). Soft Power: The Means to Success in World Politics. Hachette Book Group

- Pells, R.H. (1997). Not Like Us: How Europeans Have Loved, Hated, And Transformed American Culture Since World War II. Basic Books

- Communications Today. (2025). Apple, Google face pressure from India to open up app stores

- BRICS. (2025). BRICS Foreign Ministers signed declaration on AI governance

- NEOM. (2025). NEOM-Cognitive City

- Gaia-X Initiative. (2025). Gaia-X

- EU GDPR. (2018). General Data Protection Regulation, Article 20 (Portability)

- NIST. (2023). AI Risk Management Framework

- European Commission. (2021). Europe’s Digital Decade: digital targets for 2030

- OPEC. (1960). OPEC founding charter

- Tufekci, Z. (2018). Twitter and Tear Gas. Yale University Press

- Banks, J. (2024). Deletion, departure, death: Experiences of AI companion loss. Journal of Psychology

- Adam, D. (2025). What Are AI Chatbot Companions Doing to Our Mental Health? Scientific American

- Lavin, R. et al. (2024). A Survey on the Applications of Zero-Knowledge Proofs. arXiv

- Griffiths, J. (2021). The Great Firewall of China. Bloomsbury

- inCountry. (2024). China’s Data Sovereignty Laws and Regulations

- World Economic Forum. (2025). What is digital sovereignty and how are countries approaching it?

- Duffy, C. (2025). 23andMe is looking to sell customers’ genetic data. Here’s how to delete it. CNN

- Reed, D.P. (2001). The Law of the Pack. Harvard Business Review

- Gartner Research. (2023). Cloud Governance Best Practices: Managing Vendor Lock-In Risks

- Open Source Initiative. (2025). Open Source Initiative

- RISC-V International. (2025). RISC-V International

- LeCun, Y. (2024). How an Open Source Approach Could Shape AI. Time

- Liang, W. (2025). We’re Done Following. The China Academy

- Kurnikov, A. (2021). Trusted Execution Environments in Cloud Computing. PhD Thesis, Aalto University

- European Commission. (2024). EU AI Act

- Bria, F. (2025). Europe Must Avoid Becoming a Digital Colony. Foreign Policy

- Zhou, L. et al. (2024). Leveraging zero knowledge proofs for blockchain-based identity sharing. Journal of Information Security and Applications

- Risko, E.F., & Gilbert, S.J. (2016). Cognitive Offloading. Trends in Cognitive Sciences

Written on: May 16, 2025